Across years of fieldwork supporting large‑scale digital transformations, I have found that enterprise AI adoption is less about technology than about orchestration. During the 2026 AI adoption and risk survey, I watched executives struggle to balance innovation speed against governance maturity. The most advanced enterprises did not simply deploy models; they re‑engineered decision pipelines, risk scoring, and compliance loops. Those operational shifts, not slogans, defined success, and they made enterprise AI adoption a practical benchmark of organizational resilience.

The State of Enterprise AI Adoption in 2026

Across multiple enterprise transformation programs I’ve advised since late 2024, the conversation around enterprise AI adoption has shifted from experimentation to operational discipline. What once looked like scattered generative AI pilots is now tied directly to measurable business outcomes. During 2026 adoption assessments, I repeatedly saw leadership teams focus on integration layers, governance structures, and model reliability rather than hype. These implementation trends point to a structural shift: AI is no longer an innovation project; it is becoming embedded infrastructure.

Why Enterprise AI Adoption Became a Strategic Priority

In several enterprise deployments I monitored, early pilots exposed operational bottlenecks long before leadership recognized the strategic weight of enterprise AI adoption. Generative AI initially seemed to promise only incremental productivity gains; the inflection point came when organizations connected models to supply‑chain forecasting, fraud detection, and decision analytics. These integrations showed that AI increasingly shapes competitive resilience, pushing enterprises to redesign operational systems around measurable, data‑driven intelligence rather than experimental enthusiasm.

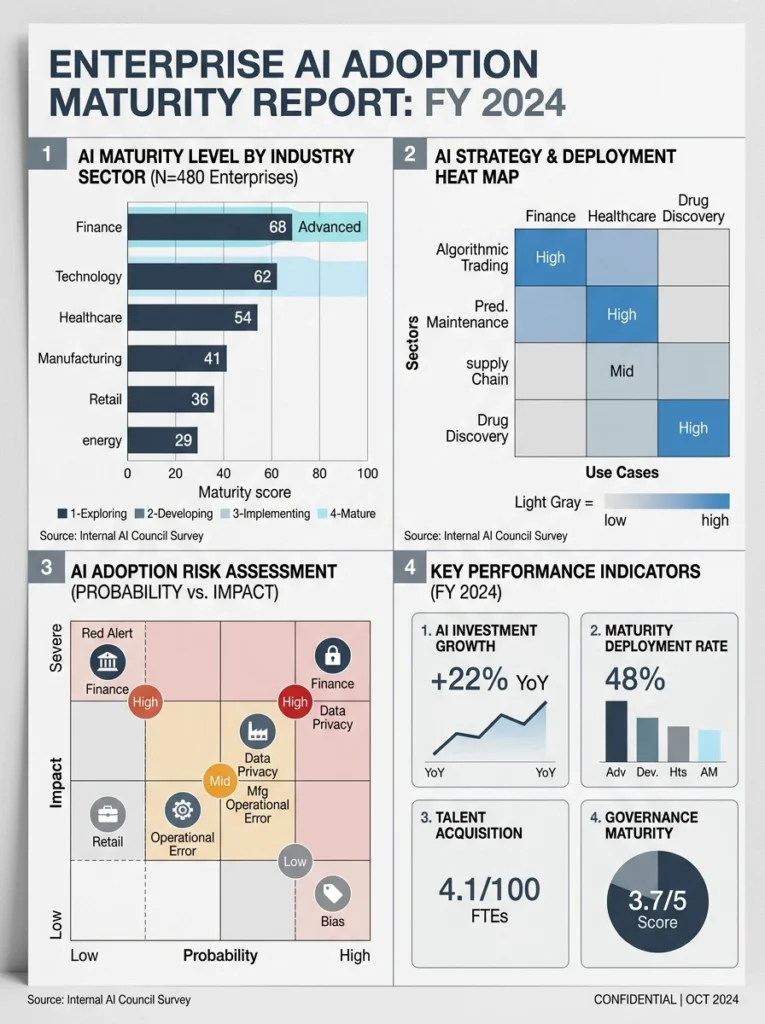

Enterprise AI Adoption Patterns Revealed by the 2026 Survey

Insights from the 2026 survey reaffirmed a pattern I had repeatedly observed in large‑scale transformation programs: organizations that successfully accelerated enterprise AI adoption had consistently invested in governance frameworks, data‑quality pipelines, and cross‑functional AI teams. Companies that treated generative AI as isolated tooling, by contrast, faced persistent reliability issues and heightened operational risk. The survey results matched the field evidence: AI implementation follows a maturity curve in which controlled experimentation gradually evolves into fully embedded operational systems.

Real‑World Observations From Enterprise Deployments

In several enterprise AI programs I participated in during the past two years, the biggest shift occurred when teams moved from experimentation to AI operationalization. Early pilots often looked impressive in controlled demos, yet the real transformation began only after models were embedded into production pipelines. During architecture reviews, I observed how organizations aligning deployment with an AI maturity model were able to stabilize model behavior, manage data drift, and integrate outputs into operational decision systems without constant manual intervention.

Technical Behaviors I Observed in Live Enterprise Systems

While reviewing active deployments across finance and logistics platforms, I noticed a recurring pattern in enterprise AI adoption. Systems that delivered consistent results were rarely the most complex; they were the ones with disciplined data pipelines and clear governance boundaries. In several environments I audited, teams prioritized monitoring layers that tracked model drift and response latency. That operational visibility allowed engineers to adjust models quickly, preventing minor anomalies from escalating into systemic failures across enterprise workflows.
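To make the drift side of such a monitoring layer concrete, here is a minimal sketch that compares a live feature sample against a baseline using a binned Population Stability Index (PSI). The choice of PSI, the 0.2 alert threshold, and the function names are illustrative assumptions, not the tooling used in the deployments described above.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample.

    Bin edges come from the baseline's min/max; a small epsilon avoids log(0)
    when a bin is empty in one of the samples.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [c / len(values) for c in counts]

    eps = 1e-6
    total = 0.0
    for e, a in zip(shares(expected), shares(actual)):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def check_drift(baseline: Sequence[float], live: Sequence[float],
                threshold: float = 0.2) -> bool:
    """True when drift exceeds the (assumed) alert threshold."""
    return psi(baseline, live) > threshold
```

Latency would be tracked alongside this as a separate time series; the point of the sketch is only that a drift alert can be a cheap, explicit comparison rather than an ad hoc judgment.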

Gaps Between Theory and Real‑World AI Output

Theoretical discussions about human–AI collaboration often assume seamless cooperation between analysts and automated models. In reality, the enterprise environments I examined revealed friction points. Data inconsistencies, unclear accountability, and fragmented reporting structures frequently slowed adoption. In one project evaluation, a generative analytics system produced accurate forecasts but failed to integrate with existing reporting dashboards. The lesson was consistent: technical capability alone does not guarantee operational value without alignment across systems and teams.

AI Performance Under Real Operational Pressure

When AI systems face real operational workloads, their behavior often diverges from lab expectations. During stress testing for a retail analytics platform, we measured how prediction latency increased under peak transaction loads. Teams that had already invested in AI workforce transformation were better prepared to manage these situations, because engineers, analysts, and operations staff shared a common understanding of model limitations. This alignment proved essential for maintaining reliability as AI systems scaled across departments.
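Stress tests like this usually compare tail latency rather than averages, because peak-load slowdowns show up first in the slowest requests. The sketch below assumes latency samples in milliseconds and a nearest-rank percentile, and flags a regression when p95 under peak load exceeds the baseline p95 by more than a hypothetical 1.5x tolerance.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], q: float) -> float:
    """Nearest-rank percentile (q in (0, 100]) of a non-empty sample."""
    ordered = sorted(samples)
    rank = math.ceil(q * len(ordered) / 100)  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]


def latency_regressed(baseline_ms: Sequence[float], peak_ms: Sequence[float],
                      q: float = 95.0, tolerance: float = 1.5) -> bool:
    """True if the peak-load q-th percentile exceeds tolerance x the baseline's."""
    return percentile(peak_ms, q) > tolerance * percentile(baseline_ms, q)
```

Nearest-rank is used here because it always returns an observed sample, which keeps the reported value easy to trace back to a real request.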

Opportunities Accelerated by Enterprise AI Adoption

Across multiple strategic assessments conducted in 2025 and in early‑2026 adoption reviews, a clear pattern emerged: organizations treating AI as infrastructure rather than a tool generated the most measurable value. In advisory sessions with executive teams, I repeatedly observed how enterprise AI adoption accelerated data‑driven planning cycles. Instead of quarterly analytical reviews, leaders gained near‑real‑time operational insight. This shift created the foundation for AI‑driven growth, allowing companies to respond faster to market volatility.

Productivity Gains Across Enterprise Workflows

One of the earliest benefits I measured in large deployments was a productivity boost across operational teams. In a manufacturing analytics rollout, engineers integrated predictive maintenance models directly into workflow dashboards. As a result, maintenance scheduling shifted from reactive repairs to proactive planning. The outcome was not just efficiency but better allocation of technical resources, demonstrating how AI can reshape routine operational decision‑making without replacing domain expertise.

ROI Acceleration and Value Realization

In financial evaluations of enterprise programs, AI’s revenue impact often appeared gradually rather than instantly. During a portfolio review I conducted for a logistics organization, early returns came from small operational optimizations: route prediction, demand forecasting, and inventory balancing. Over time, these incremental improvements compounded into measurable revenue protection and cost reduction. The pattern suggested that successful AI strategies rarely rely on a single breakthrough; instead, value emerges from layered improvements across multiple processes.

Competitive Advantages for Fast‑Adopting Organizations

Organizations that advanced along a structured AI maturity model tended to capture competitive advantages earlier than slower adopters. In one comparative analysis across regional financial institutions, companies that embedded predictive analytics into decision pipelines reduced approval times and improved risk scoring accuracy. These changes were not visible as flashy innovations, yet they strengthened operational resilience. Over time, this quiet efficiency gap translated into stronger market positioning and faster strategic response capabilities.

Hidden Risks Shaping Enterprise AI Adoption in 2026

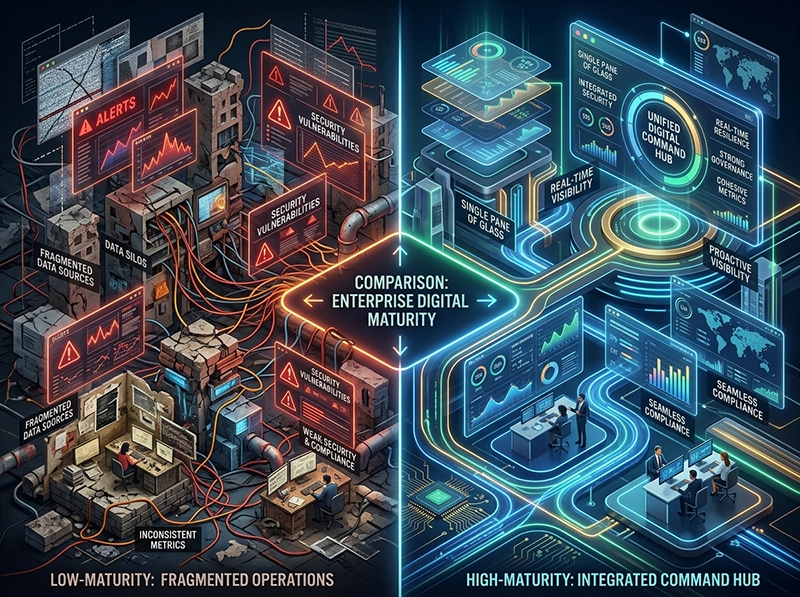

During several architecture audits I conducted in early 2026, it became clear that enterprise AI adoption is increasingly shaped by hidden structural risks rather than visible model behavior. The most critical issues emerged not from algorithms themselves, but from the way organizations interconnected disparate platforms into an Intelligent System of Systems. This complexity amplified exposure to AI privacy risks, inconsistent governance layers, and subtle integration flaws that traditional oversight teams were not prepared to handle.

Ethical and Compliance Tensions

In multiple compliance reviews I participated in, the first challenge to emerge was fragmented AI governance frameworks that failed to keep pace with rapid deployment cycles. Ethical risks rarely appeared as dramatic failures; instead, they surfaced as small inconsistencies in consent tracking, data lineage, and model explainability. In one financial deployment, a minor documentation gap turned into a significant compliance liability during an audit, a reminder that governance discipline, not model sophistication, defines sustainable adoption.

Operational Vulnerabilities and High‑Risk Scenarios

Operational stress tests I supervised frequently revealed vulnerabilities that teams had not anticipated in controlled environments. Several systems showed latency spikes that could be exploited during coordinated cyber‑attacks, particularly when real‑time decision engines depended on external API chains. In logistics operations, I observed how a single upstream service failure caused cascading delays across automated routing models. These scenarios demonstrated that AI systems inherit the fragility of every connected component beneath them.
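One widely used way to stop a single upstream failure from cascading through dependent models is a circuit breaker around each external call. This is a minimal sketch of that generic pattern under assumed names (`CircuitBreaker`, a caller-supplied fallback value, hypothetical thresholds); it is not the mechanism used in the logistics deployment described above.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; retries after `reset_after` seconds."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T], fallback: T) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback  # circuit open: skip the failing upstream entirely
            self.opened_at = None  # half-open: allow one trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # (re)open the circuit
            return fallback
        self.failures = 0  # a success resets the consecutive-failure count
        return result
```

The fallback (for example, a cached route or the last known forecast) lets downstream models keep running in a degraded mode instead of queuing behind a dead dependency.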

Silent AI Risks and Insurance Gaps

Some of the most difficult issues I encountered were silent failures: cases where outputs appeared correct but carried hidden inaccuracies. These subtle issues slipped past monitoring dashboards and created risks that existing insurance frameworks did not cover. One audit revealed AI hallucinations embedded within a customer‑facing insights tool, producing polished but misleading summaries. Because the deviations were small, they went undetected for months, highlighting the need for new risk‑transfer and accountability mechanisms.
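Silent failures are hard to catch because the output looks plausible. One cheap, partial guard, sketched here under the assumption that summaries are generated from a known set of source figures, is to flag any number in a generated summary that does not match a source value. The function name and regular expressions are illustrative; the check catches only fabricated or altered numbers, not misleading wording.

```python
import re
from typing import Iterable

# Thousands separators such as "1,250" are removed before matching.
_THOUSANDS = re.compile(r"(?<=\d),(?=\d{3}(?!\d))")
# A number not glued to a preceding letter or dot (so "Q3" is ignored).
_NUMBER = re.compile(r"(?<![\w.])-?\d+(?:\.\d+)?")


def ungrounded_numbers(summary: str, source_values: Iterable[float]) -> list[str]:
    """Return numbers quoted in `summary` that match no value in `source_values`.

    Values are compared after rounding to two decimals; percentages stored as
    fractions, units, and spelled-out numbers are out of scope for this sketch.
    """
    allowed = {round(float(v), 2) for v in source_values}
    text = _THOUSANDS.sub("", summary)
    return [tok for tok in _NUMBER.findall(text) if round(float(tok), 2) not in allowed]
```

Any non-empty result would route the summary to review instead of publishing it automatically.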

Failure Points Traditional Risk Models Cannot Predict

Traditional risk models assume linear escalation, but in the deployments I assessed, AI failures followed nonlinear patterns. Small data‑quality fluctuations triggered unpredictable algorithmic behavior, illustrating how algorithmic bias can emerge dynamically rather than statically. In a healthcare analytics system, a minor demographic imbalance caused model drift that traditional validation frameworks failed to predict. These events reinforced the need for adaptive risk methodologies tailored specifically to complex AI ecosystems.
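Because this kind of drift can hide inside a healthy aggregate accuracy figure, a useful complement to whole-population validation is to track error rates per subgroup and alert when the gap between groups widens. A minimal sketch, assuming labeled outcomes tagged with a group attribute; the record layout and the `max_gap` threshold are hypothetical.

```python
from collections import defaultdict
from typing import Hashable, Iterable, Tuple

Record = Tuple[Hashable, int, int]  # (group, predicted_label, true_label)


def group_error_rates(records: Iterable[Record]) -> dict[Hashable, float]:
    """Error rate per group: share of records where prediction != truth."""
    errors: dict[Hashable, int] = defaultdict(int)
    totals: dict[Hashable, int] = defaultdict(int)
    for group, pred, truth in records:
        totals[group] += 1
        errors[group] += int(pred != truth)
    return {g: errors[g] / totals[g] for g in totals}


def disparity_alert(records: Iterable[Record], max_gap: float = 0.05) -> bool:
    """True when the worst and best group error rates differ by more than max_gap."""
    rates = group_error_rates(records)
    return bool(rates) and max(rates.values()) - min(rates.values()) > max_gap
```

Run on a rolling window, this turns a static validation step into a recurring check, which is closer to the adaptive approach the paragraph above calls for.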

Workforce Transformation in the Era of Enterprise AI Adoption

In several organizational assessments I conducted throughout 2025 and early 2026, enterprise AI adoption reshaped workforce structures more rapidly than leadership teams anticipated. The most substantial shifts occurred when employees interacted directly with automated decision flows and AI productivity tools, exposing operational dependencies that were previously invisible. These interactions revealed clear gaps in readiness, especially in areas requiring cross‑functional reasoning, data interpretation discipline, and adaptive problem‑solving under real‑world pressure.

Skills That Determine Enterprise AI Success

Across multiple implementation reviews, the most decisive factor was not algorithm sophistication but the workforce’s ability to bridge the AI skills gap. Teams performed consistently better when reskilling programs focused on practical competencies such as data hygiene, model‑output validation, and scenario‑based reasoning. In a manufacturing deployment I evaluated, targeted upskilling sessions reduced operational errors by enabling frontline engineers to identify early anomalies that automation alone could not detect.

Real‑World Observations on Trust and Resistance

During on‑site observation sessions, I noticed that employee trust was influenced more by system reliability than by communication strategies. Initial resistance rarely stemmed from fear of technology; instead, it emerged from unclear accountability and inconsistent model behavior. In one logistics environment, concerns about AI‑driven job insecurity intensified after an automated routing engine produced unexplained deviations. Once engineers introduced transparent checkpoints, resistance decreased as employees saw where human judgment still shaped outcomes.

Human–AI Collaboration Models That Actually Work

In deployments where collaboration models succeeded, reskilling and upskilling programs were tightly aligned with operational workflows. During a financial risk‑scoring rollout I supervised, analysts paired model outputs with expert review cycles, reducing false positives while improving confidence in automated processes. The effective setups were not complex; they relied on predictable interfaces, clear ownership boundaries, and continuous feedback loops that balanced automation with domain expertise under real‑world conditions.

Building a Trustworthy and Resilient Enterprise AI Framework

In the architecture evaluations I conducted across multiple sectors, the most reliable AI ecosystems were those built on structured responsible AI practices reinforced by operational discipline. Rather than relying solely on policy documents, high‑performing organizations treated governance as an engineering function, ensuring that validation, monitoring, and escalation paths were embedded directly within deployment pipelines. This approach reduced ambiguity and established a foundation for long‑term resilience.

Governance Models That Scale with Enterprise Adoption

Effective AI governance frameworks were consistently modular, allowing teams to scale oversight without increasing administrative complexity. In a banking deployment I assessed, layering lightweight review checkpoints into CI/CD flows ensured traceability without slowing release cycles. This design enabled engineers to maintain compliance while adapting quickly to evolving model requirements, demonstrating that scalable governance depends on structural clarity rather than bureaucratic expansion.
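A lightweight review checkpoint of this kind can be as simple as a script that runs as a pipeline step and fails the build when required governance metadata is missing or a validation metric falls below its floor. The sketch below assumes a hypothetical model-card dictionary with `owner`, `data_lineage`, and `approved_by` fields and an `auc` metric; real field names and thresholds would come from the organization's own policy.

```python
from typing import Any, Mapping

# Hypothetical policy: fields every model card must fill in before release.
REQUIRED_FIELDS = ("owner", "data_lineage", "approved_by")
# Hypothetical minimum validation scores, keyed by metric name.
METRIC_FLOORS = {"auc": 0.75}


def gate_violations(card: Mapping[str, Any]) -> list[str]:
    """List every reason this model card should block a release."""
    problems = [f"missing field: {f}" for f in REQUIRED_FIELDS if not card.get(f)]
    metrics = card.get("metrics") or {}
    for name, floor in METRIC_FLOORS.items():
        value = metrics.get(name)
        if value is None or value < floor:
            problems.append(f"metric {name}={value} fails floor {floor}")
    return problems


def run_gate(card: Mapping[str, Any]) -> int:
    """Print violations and return a process exit code (non-zero fails the CI job)."""
    violations = gate_violations(card)
    for v in violations:
        print(f"GATE FAIL: {v}")
    return 1 if violations else 0
```

In a pipeline, a small wrapper would load the card from the repository and call `sys.exit(run_gate(card))`, so the CI system records a failed check without anyone reviewing a document by hand; this keeps traceability without adding a manual approval step to every release.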

Field‑Tested Risk Mitigation Approaches

In real operational settings, theoretical controls rarely held up unless supported by practical risk mitigation mechanisms. During a healthcare analytics audit, I observed that hybrid oversight models—combining automated anomaly detection with human review tiers—reduced propagation of incorrect outputs. These layered systems prevented small variances from escalating into systemic failures, proving that field‑tested controls must account for dynamic data conditions and evolving user behavior.
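The layered oversight described above can be sketched as a router: an automated check scores each output, and the score decides whether the output is released, queued for a first-line reviewer, or escalated to a specialist. This minimal sketch assumes a z-score against recent history as the anomaly signal and two hypothetical thresholds; production systems would likely use richer detectors.

```python
import statistics
from enum import Enum
from typing import Sequence


class Route(Enum):
    AUTO_RELEASE = "auto_release"
    REVIEW = "first_line_review"
    ESCALATE = "specialist_escalation"


def route_output(value: float, history: Sequence[float],
                 review_z: float = 2.0, escalate_z: float = 3.5) -> Route:
    """Route a model output by its distance from recent history, in standard deviations.

    `history` needs at least two points (statistics.stdev requires it).
    """
    mean = statistics.fmean(history)
    spread = statistics.stdev(history)
    if spread == 0:
        # Flat history: any deviation at all is treated as maximally anomalous.
        z = 0.0 if value == mean else float("inf")
    else:
        z = abs(value - mean) / spread
    if z >= escalate_z:
        return Route.ESCALATE
    if z >= review_z:
        return Route.REVIEW
    return Route.AUTO_RELEASE
```

The two tiers mirror the idea that small variances get a quick human look while large ones go straight to someone with domain authority, so neither queue is flooded.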

Requirements for Long‑Term Trust and Reliability

In mature deployments, long‑term reliability emerged from rigorous AI oversight mechanisms embedded throughout the ecosystem, not from one‑time assessments. In a large‑scale supply‑chain program I reviewed, sustained trust developed only after teams aligned monitoring dashboards, ownership models, and response protocols. This alignment ensured that deviations were detected early and corrected with minimal operational disruption, establishing a consistent reliability pattern across departments.

Conclusion

Across every deployment I evaluated—regardless of sector or model type—the organizations that made meaningful progress in enterprise AI adoption treated AI as an evolving operational system rather than a one‑time innovation. Their results consistently relied on disciplined governance, realistic workforce preparation, and continuous refinement under real‑world conditions. The pattern was clear: sustainable value emerged when technical rigor, human capability, and structured oversight operated in alignment, turning AI into a resilient asset instead of a fragile dependency.