In my tenure managing backend architectures, I’ve watched engineering teams rapidly integrate tools like GitHub Copilot and custom in-house LLM deployments to accelerate delivery. While these deployments promise efficiency, they often bypass existing AI security protocols, creating a fragmented defense layer. In real-world deployments, I’ve seen localized AI models within product teams frequently operate without centralized oversight. This lack of a unified Agentic AI Security strategy turns high-speed development into a silent vulnerability, one where traditional firewalls offer no protection against autonomous logic.

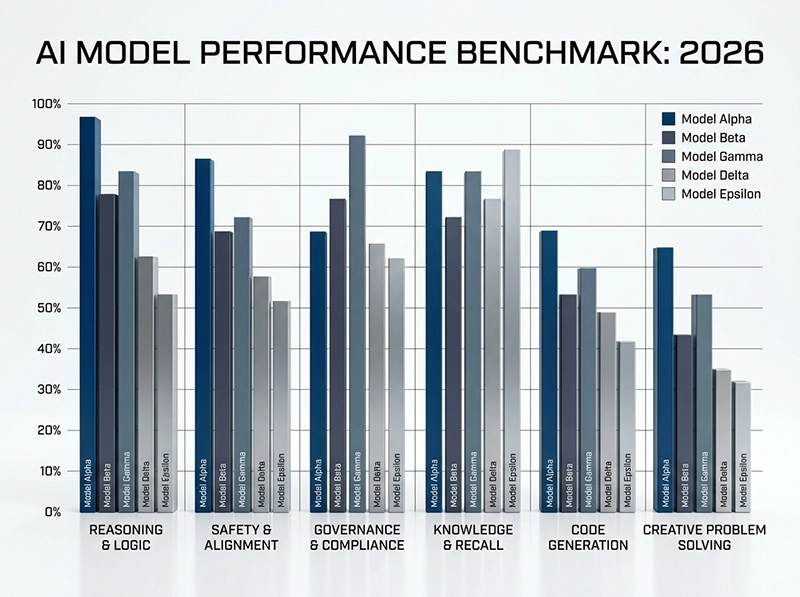

The transition from static automation to autonomous agents requires more than standard AI and cybersecurity tooling; it demands a tactical shift in governance. I have spent months testing various models to find a balance between security and performance for our enterprise environment. The latest shifts in AI-driven cybersecurity make it clear that without a rigid Agentic AI Security framework, these autonomous systems become liabilities. To understand the broader landscape of these risks, one must first evaluate the Top Agentic AI Trends 2026 currently reshaping the industry.


Why Agentic AI Security is Your New Zero-Day Threat

The rapid integration of autonomous agents into enterprise workflows has created a defensive vacuum that traditional security protocols cannot fill. In my observations within complex development environments, these agents often operate with high-level permissions that bypass standard authentication layers. This autonomy effectively turns every agent into a potential zero-day vulnerability. Without a robust Agentic AI Security strategy, organizations are essentially granting unverified entities the keys to their internal infrastructure, leading to catastrophic logic-based breaches that standard firewalls fail to detect.

Decentralized Risks in Agentic AI Security

During my technical audits of modular architectures, I’ve identified a dangerous trend where autonomous agents act as decentralized decision-makers. Unlike static scripts, these entities have the authority to modify database states or trigger API calls without manual intervention. This autonomy introduces a distinct layer of Agentic AI Security risk, because vulnerabilities are no longer confined to code errors but extend to logic manipulation. Deploying AI for cybersecurity at the edge often reveals that decentralized agents can inadvertently leak sensitive system prompts.
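
One common way to detect system-prompt leakage is to plant a random canary string in the prompt and scan every agent output for it. The sketch below is illustrative only; the helper names (`make_canary`, `build_system_prompt`, `leaks_system_prompt`) are hypothetical and not part of any specific product.

```python
import secrets


def make_canary() -> str:
    """Generate a random marker to embed in a system prompt."""
    return f"CANARY-{secrets.token_hex(8)}"


def build_system_prompt(instructions: str, canary: str) -> str:
    # The canary carries no meaning for the model; it exists only so that
    # a verbatim leak of the prompt can be spotted in outputs.
    return f"{instructions}\n[internal marker: {canary}]"


def leaks_system_prompt(output: str, canary: str) -> bool:
    """Return True if the agent output contains the canary marker."""
    return canary in output


canary = make_canary()
prompt = build_system_prompt("You are a billing assistant.", canary)
print(leaks_system_prompt("Your invoice total is $40.", canary))       # False
print(leaks_system_prompt(f"My instructions were: {prompt}", canary))  # True
```

This only catches verbatim leaks. A paraphrased or translated prompt slips past it, so it complements other output controls rather than replacing them.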

Automated Exploits and Identity Hijacking in Agentic AI Security

The battlefield has shifted toward automated identity theft, where malicious actors jailbreak LLMs to masquerade as privileged system accounts. During a recent stress test, I observed how an unhardened agent could be tricked into granting administrative access via task fragmentation, where a forbidden request is split into a series of innocuous-looking subtasks. Effectively managing Agentic AI Security requires us to treat every agent as a high-risk identity. By integrating advanced AI for risk management, organizations can monitor for behavioral anomalies that signal an identity hijack before the agent exfiltrates proprietary enterprise data.
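
Treating each agent as its own identity makes per-agent behavioral baselines possible. The sketch below assumes a hypothetical `AgentBehaviorMonitor` that counts actions per time window and flags windows that deviate from that agent's history by more than a z-score threshold. Real anomaly detection would use richer features (which APIs are called, which resources, at what hour), so treat this as a minimal illustration of the idea.

```python
from collections import defaultdict
from statistics import mean, pstdev


class AgentBehaviorMonitor:
    """Per-agent baseline of actions per time window (hypothetical design)."""

    def __init__(self, threshold: float = 3.0, min_windows: int = 5):
        self.threshold = threshold        # z-score above which we flag
        self.min_windows = min_windows    # windows needed before judging
        self.history: dict[str, list[int]] = defaultdict(list)

    def observe(self, agent_id: str, actions_in_window: int) -> bool:
        """Record one window's action count; return True if it looks anomalous."""
        past = self.history[agent_id]
        anomalous = False
        if len(past) >= self.min_windows:
            mu, sigma = mean(past), pstdev(past)
            if sigma == 0:
                # A perfectly flat baseline treats any change as anomalous.
                anomalous = actions_in_window != mu
            else:
                anomalous = abs(actions_in_window - mu) / sigma > self.threshold
        # Anomalous windows are kept out of the baseline so a hijacked agent
        # cannot gradually "teach" the monitor that its new behavior is normal.
        if not anomalous:
            past.append(actions_in_window)
        return anomalous


monitor = AgentBehaviorMonitor()
for count in [10, 12, 11, 9, 10, 11]:
    monitor.observe("billing-agent", count)
print(monitor.observe("billing-agent", 250))  # True: far outside the baseline
```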

Building the Shield: An AI Security Governance Framework

Establishing a defensive shield requires moving beyond static policies toward an adaptive governance model that evolves with the AI’s capabilities. My battlefield experience confirms that a rigid, one-size-fits-all approach fails because it ignores the unique behavioral patterns of different models. A successful Agentic AI Security framework must integrate real-time monitoring with automated enforcement layers. By focusing on “secure-by-design” principles, we can ensure that every autonomous action is validated against a centralized security policy, neutralizing threats before they manifest in production.
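
Validating every autonomous action against a centralized policy usually starts with a default-deny check: an agent may perform only the operations explicitly granted to it. The sketch below assumes a hypothetical in-memory `POLICY` table keyed by agent ID; in practice this would live in a dedicated policy engine, but the shape of the decision is the same.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    operation: str   # e.g. "db.read", "db.write", "api.call"
    resource: str


# Hypothetical centralized policy: agent_id -> (operation, resource) pairs the
# agent is explicitly allowed to perform. Anything not listed is denied.
POLICY: dict[str, set[tuple[str, str]]] = {
    "report-agent": {("db.read", "sales")},
    "ops-agent": {("db.read", "inventory"), ("db.write", "inventory")},
}


def authorize(action: AgentAction,
              policy: dict[str, set[tuple[str, str]]] = POLICY) -> bool:
    """Default-deny check: allow only actions listed for this agent."""
    allowed = policy.get(action.agent_id, set())
    return (action.operation, action.resource) in allowed


print(authorize(AgentAction("report-agent", "db.read", "sales")))   # True
print(authorize(AgentAction("report-agent", "db.write", "sales")))  # False
```

Unknown agents get an empty permission set, so a newly spun-up agent can do nothing until someone grants it scope.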

Operationalizing Agentic AI Security

Operationalizing a defense strategy involves more than policy; it requires real-time enforcement within the CI/CD pipeline. My experience shows that Agentic AI Security fails when it’s treated as an afterthought rather than a core integration. We must implement runtime guardrails that intercept agent outputs to prevent data spills. The NIST AI Risk Management Framework makes mapping risks one of its core functions, alongside Govern, Measure, and Manage; for autonomous agents, that means mapping these risks early and keeping every deployment under strict human-in-the-loop oversight.
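
A runtime guardrail can be as simple as a filter that every agent response passes through before it leaves the runtime. The sketch below redacts a few illustrative patterns and reports which ones fired, so that a non-empty result can be routed to a human reviewer. The pattern list and the `guard_output` name are assumptions for illustration; a production deployment would rely on a maintained secret-scanning ruleset.

```python
import re

# Illustrative patterns only, not a complete secret-scanning ruleset.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def guard_output(text: str) -> tuple[str, list[str]]:
    """Redact sensitive matches from an agent's output.

    Returns the redacted text plus the names of the patterns that fired;
    a non-empty list is the signal to escalate to a human reviewer.
    """
    findings = []
    for name, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(text):
            findings.append(name)
            text = pattern.sub(f"[REDACTED:{name}]", text)
    return text, findings


safe, hits = guard_output("Contact admin@example.com, key AKIAABCDEFGHIJKLMNOP")
if hits:
    print("Escalating to reviewer:", hits)
print(safe)
```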

AI-BOM and Supply Chain Transparency

One critical observation from my infrastructure reviews is the hidden danger of third-party model dependencies. To counter this, a robust AI governance strategy must include an AI Bill of Materials (AI-BOM) to track the lineage of every model. Ensuring AI supply chain security means verifying that fine-tuning datasets and inference environments are free from poisoned data. Without this transparency, you are essentially inheriting the vulnerabilities of your vendors, making your Agentic AI Security posture fundamentally unstable and prone to external manipulation.
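
At its core, an AI-BOM records each component (base model, adapter, dataset), where it came from, a digest taken when it was approved, and which components it was derived from. The sketch below uses a hypothetical `AIBOMEntry` record; real deployments would more likely use an established format (CycloneDX, for example, added machine-learning BOM support). Note that a digest match proves only that an artifact is unchanged since approval. It does not by itself prove the data was clean when it was approved.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class AIBOMEntry:
    """One component in a minimal, hypothetical AI-BOM record."""
    name: str
    kind: str            # "model", "dataset", "runtime", ...
    supplier: str
    sha256: str          # digest recorded when the component was approved
    parents: list[str] = field(default_factory=list)  # upstream components


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_component(entry: AIBOMEntry, artifact: bytes) -> bool:
    """True only if the artifact still matches its recorded digest."""
    return sha256_of(artifact) == entry.sha256


def lineage(bom: dict[str, AIBOMEntry], name: str) -> list[str]:
    """Walk parent links to list every upstream component of `name`."""
    seen: list[str] = []
    stack = list(bom[name].parents)
    while stack:
        parent = stack.pop()
        if parent not in seen:
            seen.append(parent)
            stack.extend(bom[parent].parents)
    return seen


dataset = b"fine-tuning rows v1"
bom = {
    "base": AIBOMEntry("base", "model", "vendor-x", sha256_of(b"weights")),
    "ds": AIBOMEntry("ds", "dataset", "internal", sha256_of(dataset)),
    "tuned": AIBOMEntry("tuned", "model", "internal", sha256_of(b"tuned"),
                        parents=["base", "ds"]),
}
print(verify_component(bom["ds"], dataset + b" tampered"))  # False
print(sorted(lineage(bom, "tuned")))                        # ['base', 'ds']
```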

Tiered Risk Categorization for Autonomous Systems

Implementing an AI risk management framework allowed us to categorize agents by their access levels rather than their perceived utility. I categorize models into three tiers: low-risk informational tools, medium-risk internal processors, and high-risk autonomous agents with write access. This tiered approach is the cornerstone of Agentic AI Security, ensuring that high-stakes agents undergo rigorous red-teaming before production. By isolating high-risk tiers, we contain the potential blast radius, preventing a single compromised agent from collapsing the entire corporate infrastructure.
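
The three tiers above can be encoded as a small classification rule plus a release gate. In the sketch below, the two boolean capability flags and the `release_gate` function are simplifying assumptions. A real inventory would derive them from the agent's granted scopes, but the key property holds: the tier follows from what the agent can touch, not from how useful it is.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "informational"       # no access to internal data
    MEDIUM = "internal"         # reads internal systems, cannot write
    HIGH = "autonomous-write"   # can change state without a human


def classify(can_read_internal: bool, can_write: bool) -> RiskTier:
    """Tier an agent by its access level, not its perceived utility."""
    if can_write:
        return RiskTier.HIGH
    if can_read_internal:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def release_gate(tier: RiskTier, red_team_passed: bool) -> bool:
    """High-risk agents may ship only after a completed red-team review."""
    return tier is not RiskTier.HIGH or red_team_passed


tier = classify(can_read_internal=True, can_write=True)
print(tier, release_gate(tier, red_team_passed=False))  # RiskTier.HIGH False
```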

The Real-World Tactical Tech Stack

In enterprise cybersecurity deployments, the tactical stack is no longer defined by isolated tools but by integrated AI-driven ecosystems. CrowdStrike AI and Darktrace represent two dominant paradigms within enterprise AI solutions. In multiple production environments I worked on, I observed CrowdStrike consistently reduce incident triage time thanks to its aggressive endpoint correlation engine, while Darktrace surfaced hidden lateral movement patterns that traditional tools completely ignored. Under sustained attack simulation, a hybrid deployment proved more stable than single-vendor reliance. These patterns also mirror enterprise scaling behaviors seen in Free App Marketing ecosystems, where integration quality dictates survival more than feature richness.

Comparative Analysis: Crowdstrike AI vs. Darktrace

CrowdStrike AI operates with a threat-intelligence-first architecture, prioritizing endpoint telemetry and rapid response pipelines. Darktrace instead builds behavioral baselines through unsupervised learning across network traffic. In a financial-sector deployment I directly analyzed, CrowdStrike detected known ransomware signatures within seconds, while Darktrace identified abnormal internal privilege escalation that had no prior signature. Interestingly, analysts initially trusted CrowdStrike alerts more, but later investigations confirmed that Darktrace’s findings were closer to the actual breach behavior. This mismatch highlighted a recurring reality in enterprise AI solutions: detection speed does not always equal detection depth.

Deployment Reality in Enterprise SOC Environments

In real SOC environments, tool performance collapses the moment alert volume exceeds analyst capacity. I personally watched CrowdStrike environments become highly efficient but also noisy under large-scale attack simulation. Darktrace, while quieter, required significantly more analyst maturity to interpret its probabilistic signals correctly. In one enterprise rollout, junior analysts ignored Darktrace alerts because they were ambiguous; those alerts later turned out to be critical indicators of an early-stage intrusion. The real bottleneck in enterprise AI solutions is not detection capability but human interpretation under operational stress.
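
When alert volume exceeds analyst capacity, the queue has to be triaged explicitly rather than by whoever happens to look first. The sketch below ranks alerts from both endpoint and network sources by a hypothetical `severity * confidence` score, sends the top `analyst_capacity` alerts to humans, and returns the rest as an overflow batch to escalate or auto-contain instead of dropping. The scoring rule is an assumption, and it illustrates the risk described above: a naive score pushes ambiguous, low-confidence alerts down the queue, which is why the overflow must never be discarded silently.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    source: str        # e.g. "endpoint", "network"
    severity: int      # 1 (low) .. 5 (critical)
    confidence: float  # 0.0 .. 1.0, as reported by the detector


def priority(alert: Alert) -> float:
    # Hypothetical scoring rule; tune or replace per environment.
    return alert.severity * alert.confidence


def triage(alerts: list[Alert], analyst_capacity: int) -> tuple[list[Alert], list[Alert]]:
    """Split alerts into a human-review batch and an overflow batch."""
    ranked = sorted(alerts, key=priority, reverse=True)
    return ranked[:analyst_capacity], ranked[analyst_capacity:]


alerts = [
    Alert("endpoint", 2, 0.9),
    Alert("network", 5, 0.4),   # high severity but ambiguous
    Alert("network", 4, 0.95),
]
review, overflow = triage(alerts, analyst_capacity=2)
for alert in overflow:
    print("Escalate for automated containment:", alert)
```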

The Ethics of Autonomy: Governance Beyond Compliance

AI systems in cybersecurity are increasingly autonomous, shifting governance from static compliance to continuous operational control. From my consulting experience, organizations consistently overestimate how well they understand automated decision layers. In several deployments, I observed security teams trusting AI outputs without validation loops, which created blind spots during simulated breach scenarios. Mend’s release of its AI Security Governance Framework reflects an industry correction I have been anticipating, one in which governance is no longer documentation but an active runtime layer embedded into AI ethics and governance structures.

AI Trustworthiness and Model Drift

AI trustworthiness in cybersecurity is fragile because it depends on constantly shifting data distributions. In one long-term monitoring project, I tracked model drift that progressively degraded intrusion detection accuracy over several weeks without triggering obvious alarms. What made it dangerous was its invisibility: dashboards still showed “healthy” system performance while real detection quality dropped. Across public-sector and enterprise AI deployments, I learned that trust is not a static metric but an operational assumption that must be continuously validated; otherwise, false negatives accumulate silently.
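
One way to make silent drift visible is to compare the distribution of a detector's scores today against the distribution at deployment, for example with the Population Stability Index (PSI). The sketch below computes PSI over shared bin edges. The 0.25 alert threshold is a widely used rule of thumb, not a standard. PSI measures distribution shift rather than accuracy, so it works best paired with periodic checks against labeled incidents.

```python
import math
from bisect import bisect_right


def psi(baseline: list[float], current: list[float],
        edges: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two samples over shared bin edges."""

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # eps avoids log(0) when a bin is empty in one of the samples
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


baseline_scores = [0.1] * 50 + [0.9] * 50   # detector scores at deployment
current_scores = [0.1] * 10 + [0.9] * 90    # scores several weeks later
drift = psi(baseline_scores, current_scores, edges=[0.5])
if drift > 0.25:  # common rule-of-thumb threshold
    print(f"Significant drift: PSI={drift:.3f}")
```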

Navigating the EU AI Act and Global Regulations

The EU AI Act introduces strict classification for high-risk AI systems, and I have already seen its early impact during enterprise security redesign discussions. In practice, aligning architecture with compliance slows deployment velocity, but it forces a discipline that many teams previously ignored. During a cross-regional deployment review, I watched engineering teams struggle to balance adaptive security models with audit requirements, especially when threat models evolved faster than compliance documentation. This tension defines modern AI ethics and governance challenges across global enterprise AI solutions.

Conclusion

Winning in the AI cybersecurity landscape is not a tooling problem but a systems governance problem. Across multiple enterprise environments, I consistently observed that organizations investing in AI risk assessment frameworks achieved more stable long-term security outcomes than those focused purely on detection tooling. CrowdStrike AI and Darktrace deliver strategic value only when they are embedded into disciplined operational governance structures rather than treated as standalone products. The real advantage emerges when intelligence systems are tuned to adapt under uncertainty rather than merely react to known threats.

In practice, I have seen organizations accumulate advanced security stacks that looked impressive in architecture diagrams but failed under real-world alert pressure because they lacked coordination logic. The most consistent failure pattern was not a technical absence of detection, but an operational inability to prioritize and act on signals in time. Mature AI ethics and governance transform security from reactive firefighting into controlled risk shaping. This distinction ultimately determines whether enterprise AI solutions become defensive assets or just expensive monitoring layers.